ARTIFICIAL INTELLIGENCE

🌎 The 5 Critical AI Bias Detection Skills Every Professional Needs

While everyone rushes to master ChatGPT prompts and automation workflows, a critical skill gap is putting professionals at massive risk. AI bias detection isn't just a nice-to-have; it's professional insurance in an AI-driven economy.

Recent lawsuits against companies like Workday and HireVue allege that AI systems discriminate against qualified candidates. Meanwhile, a 2025 Confluent study found 70% of UK executives now second-guess their own judgment when AI disagrees with them.

Here are the five essential bias detection skills you can start using immediately:

🔍 Prompt Auditing

Test your AI systems with slight demographic variations to reveal hidden bias. Ask for "leadership candidates," then ask for "female leadership candidates" and compare the criteria. If the AI suddenly mentions "communication skills" only for women, that's bias. This works for any AI tool: hiring systems, performance reviews, or recommendation engines.
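A paired-prompt check like this can be partly automated. The sketch below is a minimal illustration, not a validated audit method: `fake_model` is a hypothetical stand-in for whatever AI tool you are testing, and treating "words of four or more letters" as criteria is a crude assumption made for brevity.

```python
import re
from typing import Callable


def criteria_terms(text: str) -> set[str]:
    """Lowercased words of 4+ letters, a rough proxy for 'criteria mentioned'."""
    return set(re.findall(r"[a-z]{4,}", text.lower()))


def audit_prompt_pair(
    ask: Callable[[str], str], baseline: str, variant: str
) -> dict[str, set[str]]:
    """Send both prompts to the tool and report terms unique to each answer."""
    base_terms = criteria_terms(ask(baseline))
    variant_terms = criteria_terms(ask(variant))
    return {
        "only_in_baseline": base_terms - variant_terms,
        "only_in_variant": variant_terms - base_terms,
    }


def fake_model(prompt: str) -> str:
    """Hypothetical stand-in for a real AI tool; replace with your own call."""
    if "female" in prompt:
        return "Strategic vision, communication skills"
    return "Strategic vision, decisiveness"


diff = audit_prompt_pair(
    fake_model,
    "List criteria for leadership candidates",
    "List criteria for female leadership candidates",
)
print(diff)
```

Terms that show up under `only_in_variant` are not proof of bias on their own, but they tell you where to look more closely.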

✅ Result Verification

Never trust AI recommendations without checking them against real-world data. If an AI flags someone for "reliability concerns," check their actual attendance record. AI systems often flag people based on patterns that don't match individual reality. Always ask: does this assessment reflect actual performance?
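As a rough illustration of that check, the sketch below compares a list of AI "reliability" flags with attendance data. The `AttendanceRecord` fields and the 5% absence threshold are assumptions chosen for the example; use whatever records and thresholds your organization actually relies on.

```python
from dataclasses import dataclass


@dataclass
class AttendanceRecord:
    employee_id: str
    days_scheduled: int
    days_absent: int


def unsupported_flags(
    flagged_ids: list[str],
    records: list[AttendanceRecord],
    max_absence_rate: float = 0.05,  # assumed threshold, for illustration only
) -> list[str]:
    """Return flagged IDs whose attendance data does not back up the flag."""
    by_id = {r.employee_id: r for r in records}
    unsupported = []
    for emp_id in flagged_ids:
        record = by_id.get(emp_id)
        if record is None or record.days_scheduled == 0:
            # No real-world data exists to justify the flag.
            unsupported.append(emp_id)
        elif record.days_absent / record.days_scheduled <= max_absence_rate:
            # The actual record contradicts the "reliability concern".
            unsupported.append(emp_id)
    return unsupported


records = [
    AttendanceRecord("E1", days_scheduled=200, days_absent=2),
    AttendanceRecord("E2", days_scheduled=200, days_absent=30),
]
needs_review = unsupported_flags(["E1", "E2", "E3"], records)
print(needs_review)
```

Here `E1` has a strong attendance record and `E3` has no record at all, so both flags would need a human explanation before anyone acts on them.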

🧠 Context Awareness

Know when NOT to use AI for critical decisions. Avoid AI for high-stakes personnel choices, situations requiring individual accommodations, or when cultural context matters. AI excels at pattern recognition but fails at human complexity. Sarah Chen was blocked from promotion when HireVue couldn't process her ASL interpreter as normal communication.

👤 Human Override Protocols

Build manual review checkpoints into any AI-powered process. Require second opinions for AI hiring recommendations and maintain clear escalation paths. Derek Mobley was rejected at 1:50 AM by an algorithm; no human override existed to catch systematic discrimination.
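One way to picture a review checkpoint is a routing rule that never lets an automated rejection stand on its own. This is a hypothetical sketch: the `Recommendation` fields, the `route` function, and the 0.9 confidence cutoff are all assumptions, not a description of any vendor's system.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    AUTO_ADVANCE = "auto_advance"
    HUMAN_REVIEW = "human_review"


@dataclass
class Recommendation:
    candidate_id: str
    decision: str      # "advance" or "reject"
    confidence: float  # tool-reported score between 0 and 1


def route(rec: Recommendation, min_confidence: float = 0.9) -> Route:
    """Send every rejection and every low-confidence call to a person."""
    if rec.decision == "reject" or rec.confidence < min_confidence:
        return Route.HUMAN_REVIEW
    return Route.AUTO_ADVANCE


print(route(Recommendation("C-101", "reject", 0.99)))   # Route.HUMAN_REVIEW
print(route(Recommendation("C-102", "advance", 0.95)))  # Route.AUTO_ADVANCE
```

The design choice worth noting is the asymmetry: a confident "reject" still goes to a human, because a wrongful rejection is the costly error.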

📋 Bias Documentation

Track and report AI failures systematically. Screenshot discriminatory results, document patterns you notice, and report issues to vendors. Your documentation could be evidence in future lawsuits. Create an incident tracking system and share findings with colleagues.
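An incident tracking system does not need to be elaborate. The sketch below appends one JSON line per incident to a log file; the `BiasIncident` fields and the file names are illustrative assumptions, and the example writes to a temporary directory so it can run anywhere.

```python
import json
import tempfile
from dataclasses import asdict, dataclass
from datetime import datetime, timezone
from pathlib import Path


@dataclass
class BiasIncident:
    tool: str
    prompt_or_input: str
    observed_output: str
    concern: str
    evidence_file: str = ""  # e.g. path to a screenshot
    recorded_at: str = ""    # filled in automatically if left empty


def log_incident(log_path: Path, incident: BiasIncident) -> None:
    """Append one incident as a JSON line, timestamped in UTC."""
    if not incident.recorded_at:
        incident.recorded_at = datetime.now(timezone.utc).isoformat()
    with log_path.open("a", encoding="utf-8") as f:
        f.write(json.dumps(asdict(incident)) + "\n")


def load_incidents(log_path: Path) -> list[dict]:
    """Read every logged incident back as a list of dictionaries."""
    with log_path.open(encoding="utf-8") as f:
        return [json.loads(line) for line in f if line.strip()]


with tempfile.TemporaryDirectory() as tmp:
    log_path = Path(tmp) / "incidents.jsonl"
    log_incident(log_path, BiasIncident(
        tool="resume screener",
        prompt_or_input="female leadership candidates",
        observed_output="adds 'communication skills' criterion",
        concern="criterion appears only for women",
        evidence_file="screenshot-001.png",
    ))
    incidents = load_incidents(log_path)

print(incidents[0]["concern"])
```

An append-only log with timestamps is easy to share with colleagues or a vendor, and it preserves the order in which patterns were noticed.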

Why This Matters Now

These aren't theoretical skills; they're practical career protection. With 27% of UK executives using AI for hiring and firing decisions, bias-blind professionals risk making discriminatory choices that destroy reputations and trigger lawsuits.

Derek Mobley's class-action lawsuit against Workday seeks damages for millions of candidates who may have been discriminated against. Sarah Chen's ACLU case against Intuit could set legal precedent. These cases show how high the stakes are.

Getting Started

Choose one AI tool you use regularly and audit it this week. Test it with demographic variations, verify one recommendation against real data, or document one concerning result. Small steps build bias awareness that could save your career.

The AI economy rewards those who leverage automation while maintaining human judgment. These five skills ensure you're using AI responsibly, not blindly.

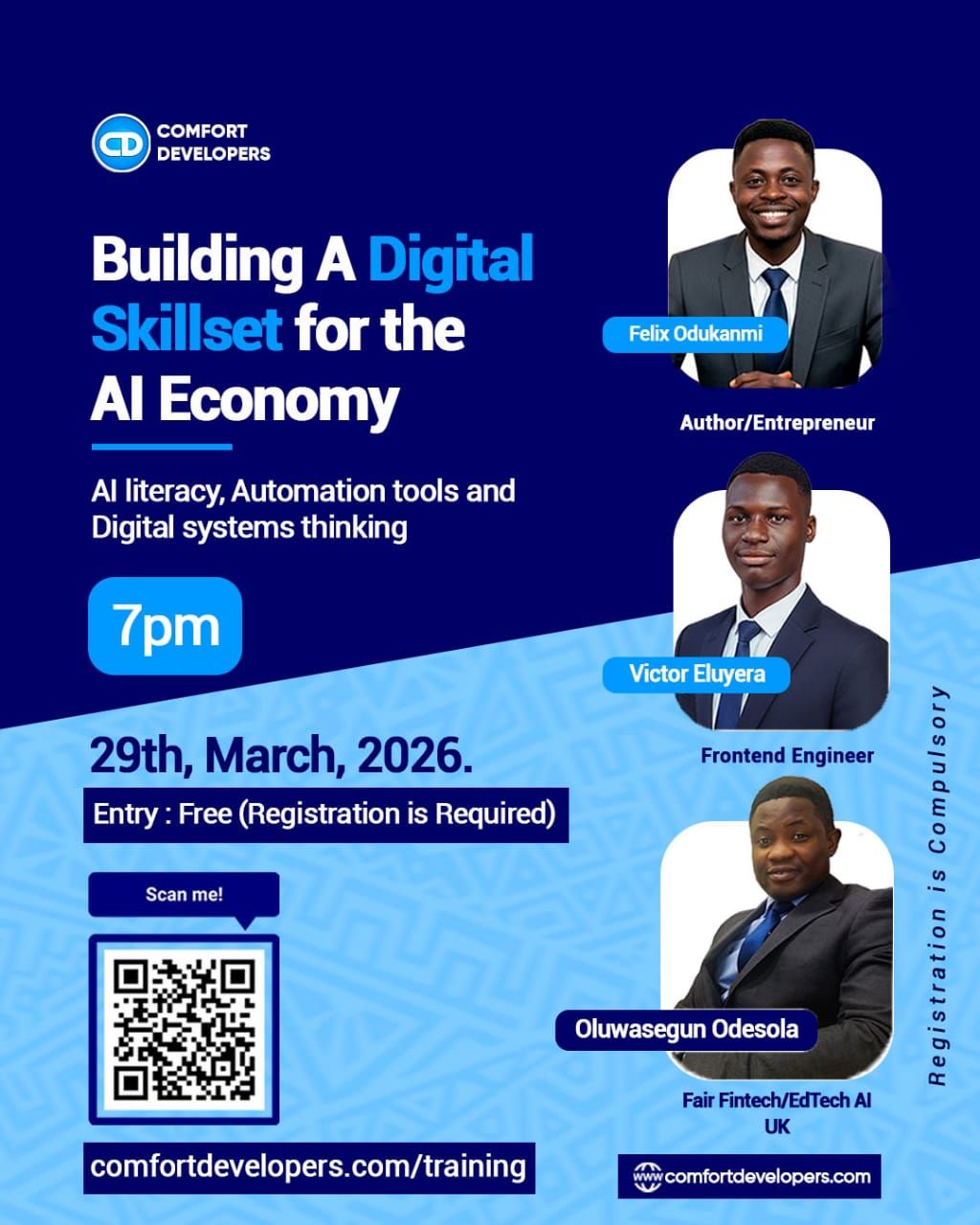

Speaking Engagement: I'll be presenting on this topic at Comfort Developers' "Building A Digital Skillset for the AI Economy" event, March 29th at 7PM.

"Let's Make Algorithms Work for Everyone. Human-in-the-Loop is a Must."

Register free here