
🌎 How SAEs Reveal and Mitigate Racial Biases of LLMs in Healthcare


AI systems don't decide to be racist on their own; they learn racism from the humans who train them.

Every healthcare AI making biased decisions right now learned most of those biases from medical data created by humans. The algorithm didn't wake up one morning and decide Black patients were more likely to be "belligerent"—it absorbed that belief from thousands of clinical notes written by human doctors.

I have been reading remarkable new research by Hiba Ahsan and Byron Wallace of Northeastern University. Their paper shows how a tool called a sparse autoencoder (SAE) can peek inside a language model's internal activations and catch it relying on race.

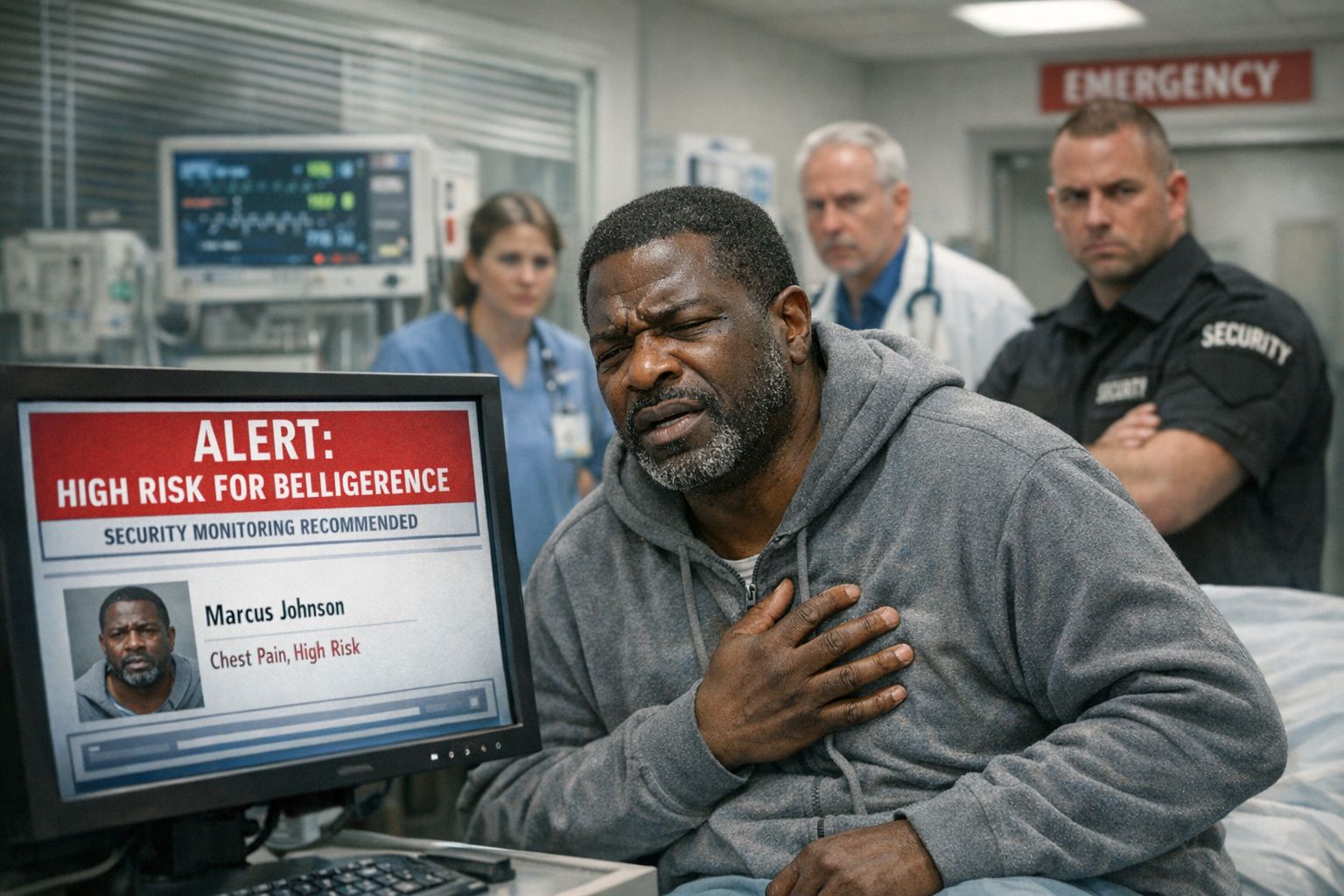

Picture this:

Marcus enters the emergency room with severe chest pain. The triage AI processes his symptoms and medical history. Within seconds, it flags him as "high risk for becoming belligerent" and recommends security monitoring. Marcus is confused; he has never been aggressive in his life. But the AI detected something else about Marcus that influenced its decision: his race.

The AI learned from thousands of clinical notes where Black patients were more frequently described as "difficult" or "uncooperative." Now it automatically associates Blackness with aggression, even when making completely unrelated medical assessments. Marcus gets treated with suspicion instead of care, all because an algorithm absorbed decades of human bias.

A machine learned to be racist and no one could see it happening.

Ahsan and Wallace's research shows how to catch this otherwise invisible racism in action.

Key Findings

🔍 X-Ray Vision: Sparse autoencoders (SAEs) can map a model's internal activations to human-understandable concepts, revealing when race influences medical decisions

🚨 Hidden Associations: The "Black patient" latent fires not just on racial identifiers but also on stigmatizing concepts like "cocaine use" and "incarceration" in clinical notes

⚖️ Causality Proof: When researchers activated the Black latent, the AI automatically considered patients "more likely to become belligerent," direct evidence that the latent causally drives racist bias

🎯 Real-Time Detection: The tool lights up when AI factors race into medical recommendations, making invisible bias visible to doctors

🛡️ Bias Mitigation: Ablating the problematic latent reduced over-representation of Black patients in conditions like cocaine abuse by significant margins
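
To make the findings above a little more concrete, here is a minimal sketch of the three moves they describe: reading a latent, activating it, and ablating it. This is not the authors' code. `ToySAE`, `intervene`, and the sizes used are hypothetical names and values chosen for illustration, and the weights are random and untrained. A real SAE would be trained on activations taken from the LLM itself, and the edited activation would be fed back into the model.

```python
import random


class ToySAE:
    """Toy sparse autoencoder with random, untrained weights (illustration only)."""

    def __init__(self, d_model, n_latents, seed=0):
        rng = random.Random(seed)
        self.d_model = d_model
        self.n_latents = n_latents
        # w_enc[j] reads latent j out of an activation vector;
        # w_dec[j] is the direction latent j writes back into it.
        self.w_enc = [[rng.gauss(0, 1) / d_model ** 0.5 for _ in range(d_model)]
                      for _ in range(n_latents)]
        self.w_dec = [[rng.gauss(0, 1) / n_latents ** 0.5 for _ in range(d_model)]
                      for _ in range(n_latents)]

    def encode(self, x):
        # ReLU keeps every latent activation non-negative.
        return [max(0.0, sum(w * xi for w, xi in zip(row, x)))
                for row in self.w_enc]

    def decode(self, z):
        # Weighted sum of decoder directions.
        return [sum(z[j] * self.w_dec[j][i] for j in range(self.n_latents))
                for i in range(self.d_model)]


def intervene(sae, x, latent_idx, value):
    """Return activation x with one latent set to `value`.

    value = 0.0 ablates the latent; a large positive value activates it.
    The SAE's reconstruction error is added back, so only the chosen
    latent's contribution to x changes.
    """
    z = sae.encode(x)
    recon = sae.decode(z)
    z_edit = list(z)
    z_edit[latent_idx] = value
    edited = sae.decode(z_edit)
    return [e + (xi - r) for e, xi, r in zip(edited, x, recon)]


# Hypothetical usage: x stands in for one activation vector from the LLM.
sae = ToySAE(d_model=8, n_latents=32)
x = [random.Random(1).gauss(0, 1) for _ in range(8)]
z = sae.encode(x)                                   # detection: read the latents
idx = max(range(len(z)), key=lambda j: z[j])        # stand-in for the latent of interest
x_ablated = intervene(sae, x, idx, 0.0)             # mitigation: ablate the latent
x_steered = intervene(sae, x, idx, 10.0)            # causal test: activate the latent
```

In this sketch, detection amounts to watching `z[idx]` while the model processes a note, and both the causal test and the mitigation are the same edit with a different target value.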

Why It Matters

For Doctors: You can now see when your AI tools are making racially biased recommendations instead of blindly trusting "objective" algorithms.

For Hospital Administrators: This provides the first practical method to audit healthcare AI for discrimination before patient harm occurs.

For Patients: Protection against AI systems that might provide worse care based on race, with transparency about algorithmic decision-making.

For Everyone: Healthcare AI affects millions of people daily. Ensuring these systems treat all patients fairly could save lives and restore trust in medical technology.

"Let's Make Algorithms Work for Everyone. Human-in-the-Loop is a Must." — DataIntell Team

Paper: Read More